June 11, 2010This post is part of what I hope will be a multi-part tutorial/cookbook series on using c2hs (Hackage).

- The Haskell Preprocessor Hierarchy (this post)

- Setting up Cabal, the FFI and c2hs

- Principles of FFI API design

- First steps in c2hs

- Marshalling with get an set

- Call and fun: marshalling redux

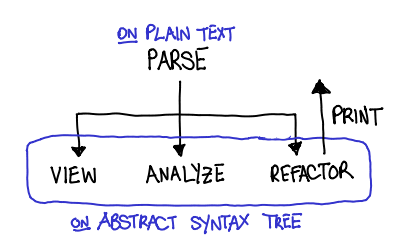

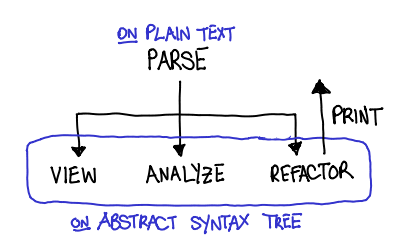

What’s c2hs? c2hs is a Haskell preprocessor to help people generate foreign-function interface bindings, along with hsc2hs and GreenCard. The below diagram illustrates how the preprocessors currently supported by Cabal fit together. (For the curious, Cpp is thrown in with the rest of the FFI preprocessors, not because it is particularly useful for generating FFI code, but because many of the FFI preprocessors also implement some set of Cpp’s functionality. I decided on an order for Alex and Happy on the grounds that Alex was a lexer generator, while Happy was a parser generator.)

What does c2hs do? Before I tell you what c2hs does, let me tell you what it does not do: it does not magically eliminate your need to understand the FFI specification. In fact, it will probably let you to write bigger and more ambitious bindings, which in turn will test your knowledge of the FFI. (More on this later.)

What c2hs does help to do is eliminate some of the drudgery involved with writing FFI bindings. (At this point, the veterans who’ve written FFI bindings by hand are nodding knowingly.) Here are some of the things that you will not have to do anymore:

- Port enum definitions into pure Haskell code (this would have meant writing out the data definition as well as the Enum instance),

- Manually compute the sizes of structures you are marshalling to and from,

- Manually compute the offsets to peek and poke at fields in structures (and deal with the corresponding portability headaches),

- Manually write types for C pointers,

- (To some extent) writing the actual

foreign import declarations for C functions you want to use

When should I use c2hs? There are many Haskell pre-processors; which one should you use? A short (and somewhat inaccurate) way to characterize the above hierarchy is the further down you go, the less boilerplate you have to write and the more documentation you have to read; I have thus heard advice that hsc2hs is what you should use for small FFI projects, while c2hs is more appropriate for the larger ones.

Things that c2hs supports that hsc2hs does not:

- Automatic generation of

foreign import based on the contents of the C header file, - Semi-automatic marshalling to and from function calls, and

- Translation of pointer types and hierarchies into Haskell types.

Things that GreenCard supports and c2hs does not:

- Automatic generation of

foreign import based on the Haskell type signature (indeed, this is a major philosophical difference), - A more comprehensive marshalling language,

- Automatic generation of data marshalling using Data Interface schemes.

Additionally, hsc2hs and c2hs are considered quite mature; the former is packaged with GHC, and (a subset of) the latter is used in gtk2hs, arguably the largest FFI binding in Haskell. GreenCard is a little more, well, green, but it recently received a refresh and is looking quite promising.

Is this tutorial series for me? Fortunately, I’m not going to assume too much knowledge about the FFI (I certainly didn’t have as comprehensive knowledge about it going in than I do coming out); however, some understanding of C will be assumed in the coming tutorials. In particular, you should understand the standard idioms for passing data to and out of C functions and feel comfortable tangling with pointers (though there might be a brief review there too).

Next time. Setting up Cabal, the FFI and c2hs.

June 9, 2010Bjarne Stroustrup once boasted, “C++ is a multi-paradigm programming language that supports Object-Oriented and other useful styles of programming. If what you are looking for is something that forces you to do things in exactly one way, C++ isn’t it.” But as Taras Glek wryly notes, most of the static analysis and coding standards for C++ are mostly to make sure developers don’t use the other paradigms.

On Tuesday, Taras Glek presented Large-Scale Static Analysis at Mozilla. You can watch the video at Galois’s video stream. The guiding topic of the talk is pretty straightforward: how does Mozilla use static analysis to manage the millions of lines of code in C++ and JavaScript that it has? But there was another underlying topic: static analysis is not just for the formal-methods people and the PhDs; anyone can and should tap into the power afforded by static analysis.

Since Mozilla is a C++ shop, the talk was focused on tools built on top of the C++ language. However, there were four parts to static analysis that Taras discussed, which are broadly applicable to any language you might perform static analysis in:

- Parsing. How do you convert a text file into an abstract representation of the source code (AST)?

- Semantic grep. How do you ask the AST for information?

- Analysis. How do you restrict valid ASTs?

- Refactoring. How do you change the AST and write it back?

Parsing. Parsing C++ is hard. Historically this is the case because it inherited a lot of syntax from C; technically this is the case because it is an extremely ambiguous grammar that requires semantic knowledge to disambiguate. Taras used Elsa (which can parse all of Mozilla, after Taras fixed a bunch of bugs), and awaits eagerly awaits Clang to stabilize (as it doesn’t parse all of Mozilla yet.) And of course, the addition of the plugin interface to GCC 4.5 means that you can take advantage of GCC’s parsing capabilities, and many of the later stage tools are built upon that.

Semantic grep. grep is so 70’s! If you’re lucky, the maintainers of your codebase took care to follow the idiosyncratic rules that make code “more greppable”, but otherwise you’ll grep for an identifier and get pages of results and miss the needle. If you have an in-memory representation of your source, you can intelligently ask for information. Taken further, this means better code browsing like DXR.

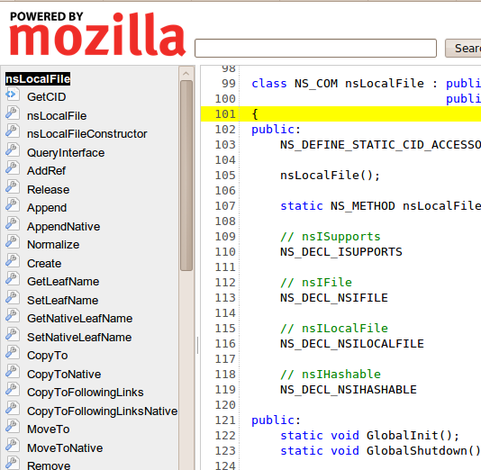

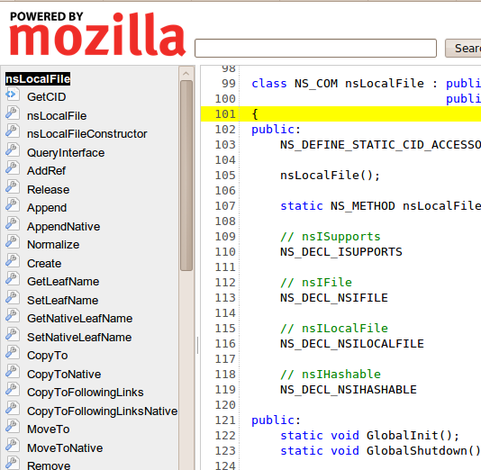

Consider the following source code view. It’s what you’d expect from a source code browser: source on the right, declarations on the left.

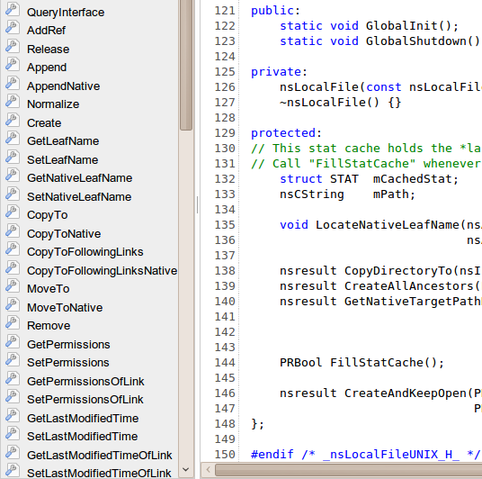

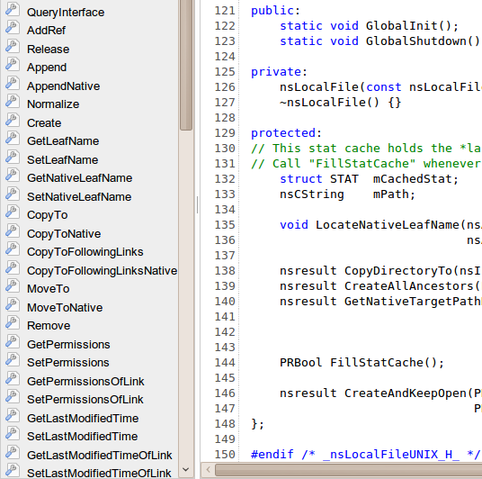

Let’s pick an identifier from the left, let’s say CopyTo. Let’s first scroll through the source code and see if we can pick it out.

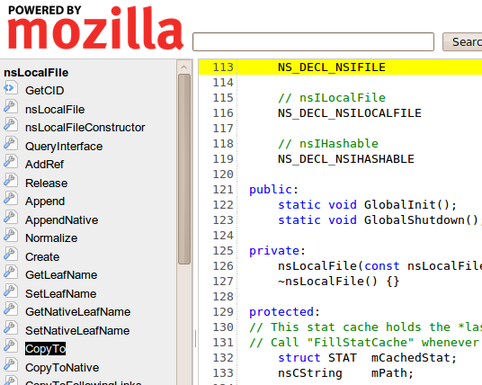

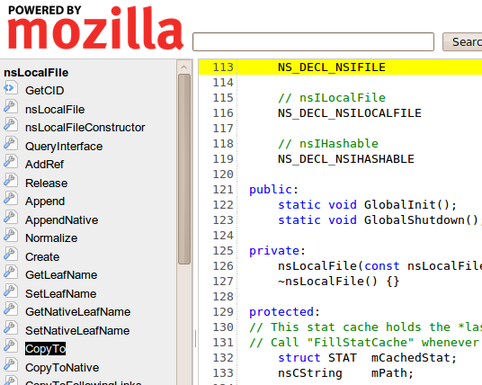

Huh! That was a really short class, and it was no where to be found. Well, let’s try clicking it.

Aha! It was in the macro definition. The tool can be smarter than the untrained eye.

Analysis. For me, this was really the flesh of the talk. Mozilla has a very pimped out build process; hooking into GCC with Dehydra and Treehydra. The idea behind the Dehydra project is to take the internal structures that GCC provides to plugins, and convert them into a JSON-like structure (JSON-like because JSON is acyclic but these structures are cyclic) that Dehydra scripts, which are written in JavaScript can be run on the result. These scripts can emit errors and warnings, which look just like GCC build errors and warnings. Treehydra is an advanced version of Dehydra, that affords more flexibility to the analysis script writer.

So, what makes Dehydra/Treehydra interesting?

- JavaScript. GCC’s plugin interface natively supports C code, which may be intimidating to developers with no static analysis experience. Porting these structures to JavaScript means that you get a high-level language to play in, and also lets you tell Junior developers without a lick of static analysis experience, “It’s just like hacking on a web application.” It means that you can just print out the JSON-like structure, and eyeball the resulting structure for the data you’re looking for; it means when your code crashes you get nice backtraces. Just like Firefox’s plugin interface, Dehydra brings GCC extensions to the masses.

- Language duct tape. I took a jab at Stroustrup at the beginning of his post, and this is why. We can bolt on extra language features like

final for classes (with attributes, __attribute__((user("NS_final"))) wrapped up in a macro NS_FINAL_CLASS) and other restrictions that plain C++ doesn’t give you. - Power when you need it. Dehydra is a simplified interface suitable for people without static analysis or compiler background; Treehydra is full-strength, intended for people with such backgrounds and can let you do things like control-flow analysis.

All of this is transparently integrated into the build system, so developers don’t have to fumble with an external tool to get these analyses.

Refactoring. Perhaps the most ambitious of them all, Taras discussed refactoring beyond the dinky Extract method that Java IDEs like Eclipse give you using Pork. The kind of refactoring like “rewrite all of Mozilla to use garbage collection instead of reference counting.” When you have an active codebase like Mozilla’s, you don’t have the luxury to do a “stop all development and start refactoring…for six years” style refactoring. Branches pose similar problems; once the major refactoring lands on one branch, it’s difficult to keep the branch up-to-date with the other, and inevitably one branch kills off the other.

The trick is an automated refactoring tool, because it lets you treat the refactoring as “just another patch”, and continually rebuild the branch from trunk, applying your custom patches and running the refactoring tool to generate a multimegabyte patch to apply in the stack.

Refactoring C++ is hard, because developers don’t just write C++; they write a combination of C++ and CPP (C pre-processor). Refactoring tools need to be able to reconstruct the CPP macros when writing back out, as opposed to languages like ML which can get away with just pretty-printing their AST. Techniques include pretty-printing as little as possible, and forcing the parser to give you a log of all of the pre-processor changes it made.

Open source. Taras left us with some words about open-source collaboration, that at least the SIPB crowd should be well aware of. Don’t treat tools you depend on black boxes: they’re open-source! If you find a bug in GCC, don’t just work around it, check out the source, write a patch, and submit it upstream. It’s the best way to fix bugs, and you score instant credibility for bugs you might submit later. There is a hierarchy of web applications to browsers, browsers to compilers, compilers to operating systems. The advantage of an open-source ecosystem is that you can read the source all the way down. Use the source, Luke.

June 7, 2010Attention conservation notice. The author reminisces about learning physics in high school, and claims that all too often, teaching was focused too much on concrete formulas, and not the unifying theory around them.

In elementary school, you may have learned D=RT (pronounced “dirt”), that is, distance is rate multiplied with time. This was mostly lies, but it was okay, because however tenuous the equation’s connection to the real world, teachers could use it to introduce the concept of algebraic manipulation, the idea that with just D=RT, you could also find out R if you knew D and T, and T if you knew D and R. Unless you were unusually bright, you didn’t know you were being lied to; you just learned to solve the word problems they’d give you.

Fast forward to high school physics. The lie is still preached, though dressed in slightly different clothes: “Position equals velocity times time,” they say. But then the crucial qualifier would be said: “This equation is for uniform motion.” And then you’d be introduced to your friend uniform acceleration, and there’d be another equation to use, and by the time you’d finish the month-long unit on motion, you’d have a veritable smörgåsbord of equations and variables to keep track of.

CollegeBoard AP Physics continues this fine tradition, as stated by their equation sheet:

$v = v_0 + at$

$x = x_0 + v_0t + \frac12at^2$

With the implicit expectation that the student knows what each of these equations means, and also the inadvertent effect of training students to pattern match when an equation is appropriate and which values go to which variables.

I much prefer this formulation:

$v = \int a\ dt$

$x = \int v\ dt$

With these two equations, I tap into calculus, and reach the very heart of the relationship between position, velocity and acceleration: one is merely the derivative of the previous. These equations are fully general (not only do they work for non-uniform motion, they work on arbitrary-dimensional vectors too), compact and elegant. They’re also not immediately useful from a calculational standpoint.

Is one more valuable than the other? They are good for different things: the first set is more likely to help you out if you want to calculate how long it will take for an apple to fall down from a building, neglecting air resistance. But the second set is more likely to help you really understand motion as more than just a set of algebraic manipulations.

I was not taught this until I took the advanced Classical Mechanics class at MIT. For some reason it is considered fashionable to stay stuck in concrete formulas than to teach the underlying theory. AP Physics is even worse: even AP Physics C, which purports to be more analytical, fills its formula sheet with the former set of equations.

Students have spent most of grade school learning how to perform algebraic manipulations. After taking their physics course, they will likely go on to occupations that involve no physics at all, all of that drilling on exercises wasted. They deserve better than to be fed more algebraic manipulations; they deserve to know the elegance and simplicity that is Classical Mechanics.

Postscript. For programmers reading this blog, feel free to draw your own analogies to your craft; I believe that other fields of science have much to say on the subject of abstraction. For prospective MIT students reading this blog, you may have heard rumors that 8.012 and 8.022 are hard. This is true; but what it is also true is that this pair of classes have seduced many undergraduates into the physics department. I cannot recommend the pair of classes more highly.

June 4, 2010Update. The video of the talk can be found here: Galois Tech Talks on Vimeo: Categories are Databases.

On Thursday Dr. David Spivak presented Categories are Databases as a Galois tech talk. His slides are here, and are dramatically more accessible than the paper Simplicial databases. Here is a short attempt to introduce this idea to people who only have a passing knowledge of category theory.

An essential exercise when designing relational databases is the practice of object modeling using labeled graphs of objects and relationships. Visually, this involves drawing a bunch of boxes representing the objects being modeled, and then drawing arrows between the objects showing relationships they may have. We can then use this object model as the basis for a relational database schema.

An example model from a software engineering class is below:

With the image of a object model in your head, consider Wikipedia’s definition of a category:

In mathematics, a category is an algebraic structure consisting of a collection of “objects”, linked together by a collection of “arrows” that have two basic properties: the ability to compose the arrows associatively and the existence of an identity arrow for each object.

The rest of the definition may seem terribly abstract, but hopefully the bolded section seems to clearly correspond to the picture of boxes (objects) and arrows we drew earlier. Perhaps…

Database schema = Category.

Unfortunately, a directed graph is not quite a category; the secret sauce that makes a category a category are those two properties on the arrows, associative composition and identity, and if we really want to strengthen our claim that a schema is a category, we’ll need to demonstrate these.

Recall that our arrows are “relations,” that is, “X occupies Y” or “X is the key for Y”. Our category must have an identity arrow, that is, some relation “X to X”. How about, “X is itself X”, an almost vacuous statement, but one most certainly true. Identity arrow, check.

We also need to show associative composition of arrows. Composition of two arrows is much like they showed you when they were teaching you vector algebra: you take the head of one arrow (X to Y) and smush it with the tail of another (Y to Z), and you get another arrow (X to Z). If a “book has an author” and “an author has a favorite color”, I can say “the book’s author has a favorite color”. This composed statement doesn’t care who the author was… just what his favorite color is. In fact,

Arrow composition = Joins

That is, one of the fundamental features of a category, a feature that any nice result from pure category theory uses as if it were intuitively obvious, is one of the very techniques that does not seem obvious to someone reading about JOINs in the second half of a database tutorial.

(Aside. A foreign key relationship is intrinsically many to one: a foreign key field can only point to one record in another table, but many rows can have that field pointing to the same record. When doing relational modeling, we will frequently use many-to-many or one-to-many relationships. Any database administrator knows, however, that we can simply rewrite these into many to one relationships (reversing the arrow in the case of one-to-many and introducing a new table for many-to-many).)

When we have a schema, we also want to have data to fill the schema. As it turns out, this also fits into a category-theoretic framework too, although a full explanation is out of scope for this post (I suggest consulting the slides.)

Why do you care? There are some good reasons mentioned by Spivak:

I’ll mention one of my own: SQL, while messy, is precise; it can be fed into a computer and turned into a databases that can do real work. On the other hand, relational models are high level but kind of mushy; developers may complain that drawing diagrams with arrows doesn’t seem terribly rigorous and that the formalism doesn’t really help them much.

Category theory is precise; it unambiguously assigns meaning and structure to the relations, the laws of composition define what relations are and aren’t permissible. Category theory is not only about arrows (if it was it’d be pretty boring); rather, it has a rich body of results from many fields expressed in a common language that can “translated” into database-speak. In many cases, important category theory notions are tricky techniques in database administrator folklore. When you talk about arrows, you’re talking a lot more than arrows. That’s powerful!

June 2, 2010Git is very careful about your files: unless you tell it to be explicitly destructive, it will refuse to write over files that it doesn’t know about, instead giving an error like:

Untracked working tree file ‘foobar’ would be overwritten by merge.

In my work with Wizard, I frequently need to perform merges on working copies that have been, well, “less than well maintained”, e.g. they untarred a new version of the the source tree on the old directory and forgot to add the newly added files. When Wizard goes in and tries to automatically upgrade them to the new version the proper way, this results in all sorts of untracked working tree file complaints, and then we have to go and manually check on the untracked files and remove them once they’re fine.

There is a simple workaround for this: while we don’t want to add all untracked files to the Git repository, we could add just the files that would be clobbered. Git will then stop complaining about the files, and we will still have records of them in the history:

def get_file_set(rev):

return set(shell.eval("git", "ls-tree", "-r", "--name-only", rev).split("\n"))

old_files = get_file_set("HEAD")

new_files = get_file_set(self.version)

added_files = new_files - old_files

for f in added_files:

if os.path.lexists(f): # *

shell.call("git", "add", f)

Previously, the starred line of code read if os.path.exists(f). Can you guess why this was buggy? Recall the difference betweeen exists and lexists; if the file in question is a symlink, exists will follow it, while lexists will not. So, if a file to be clobbered is a broken symlink, the old version of the code would not have removed it. In many case, you can’t distinguish between the cases: if the parent directory of the file that the symlink points to exists, I can create a file via the symlink, and other normal “file operations.”

However, Git is very keenly aware of the difference between a symlink and a file and will complain accordingly if it would have clobbered a symlink. Good ole information preservation!

Postscript. Yesterday was my first day of work at Galois! It was so exciting that I couldn’t get my wits together to write a blog post about it. More to come.

May 31, 2010Conservation attention notice. What definitions from the Haskell 98 Prelude tend to get hidden? I informally take a go over the Prelude and mention some candidates.

(.) in the Prelude is function composition, that is, (b -> c) -> (a -> b) -> a -> c. But the denizens of #haskell know it can be much more than that: the function a -> b is really just the functor, so a more general type is Functor f => (b -> c) -> f b -> f c, i.e. fmap. Even more generally, (.) can indicate morphism composition, as it does in Control.Category.

all, and, any, concat, concatMap, elem, foldl, foldl1, foldr, foldr1, mapM_, maximum, minimum, or, product, sequence_. These are all functions that operate on lists, that easily generalize to the Foldable type class; just replace [a] with Foldable t => t a. They can be found in Data.Foldable.

mapM, sequence. These functions generalize to the Traversable type class. They can be found in Data.Traversable.

Any numeric function or type class. Thurston, Thielemann and Johansson wrote numeric-prelude, which dramatically reorganized the hierarchy of numeric classes and generally moved things much closer to their mathematical roots. While dubbed experimental, it’s seen airplay in more mathematics oriented Haskell modules such as Yorgey’s species package.

Any list function. Many data structures look and smell like lists, and support some set of the operations analogous to those on lists. Most modules rely on naming convention, and as a result, list-like constructs like vectors, streams, bytestrings and others ask you to import themselves qualified. However, there is Data.ListLike which attempts to encode similarities between these. Prelude.Listless offers a version of the Prelude minus list functions.

Monad, Functor. It is widely believed that Monad should probably be an instance of Applicative (and the category theorists might also have you insert Pointed functors in the hierarchy too.) The Other Prelude contains this other organization, although it is cumbersome to use in practice since the new class means most existing monad libraries are not usable.

repeat, until. There is an admittedly oddball generalization for these two functions in Control.Monad.HT. In particular, repeat generalizes the identity monad (explicit (un)wrapping necessary), and until generalizes the (->) a monad.

map. It’s fmap for lists.

zip, zipWith, zipWith3, unzip. Conal’s Data.Zip generalize zipping into the Zip type class.

IO. By far you’ll see the most variation here, with a multitude of modules working on many different levels to give extra functionality. (Unfortunately, they’re not really composable…)

- System.IO.Encoding makes the IO functions encoding aware, and uses implicit parameters to allow for a “default encoding.” Relatedly, System.UTF8IO exports functions just for UTF-8.

- System.IO.Jail lets you force input-output to only take place on whitelisted directories and/or handles.

- System.IO.Strict gives strict versions of IO functions, so you don’t have to worry about running out of file handles.

- System.Path.IO, while not quite IO per se, provides typesafe filename manipulation and IO functions to use those types accordingly.

- System.IO.SaferFileHandles allows handles to be used with monadic regions, and parametrizes handles on the IO mode they were opened with. System.IO.ExplicitIOModes just handles IOMode.

May 28, 2010Attention conservation notice. Equivalent Haskell and Python programs are presented for retrieving values from a data structure using state. We then refactor the Haskell program into one that has no state, just a monoid.

A pretty frequent thing a working programmer needs to do is extract some values (frequently more than one) from some data structure, possibly while keeping track of extra metadata. I found myself writing this code the other day:

getPublicNames :: Module -> State (Map Name (Set ModuleName)) ()

getPublicNames (Module _ m _ _ (Just exports) _ _) = mapM_ handleExport exports

where handleExport x = case x of

EVar (UnQual n) -> add n

EAbs (UnQual n) -> add n

EThingAll (UnQual n) -> add n

EThingWith (UnQual n) cs -> add n >> mapM_ handleCName cs

_ -> return ()

handleCName x = case x of

VarName n -> add n

ConName n -> add n

add n = modify (Map.insertWith Set.union n (Set.singleton m))

getPublicNames _ = return ()

Briefly, getPublicNames traverses the Module data structure looking for “public names”, and every time it finds a name, it inserts it records that the current module contained that name. This lets me efficiently ask the question, “How many (and which) modules use FOO name?”

A transcription in Python might look like:

def getPublicNames(module, ret=None):

if not ret:

ret = defaultdict(set)

if module.exports is None:

return ret

for export in module.exports:

if isinstance(export, EVar) or \

isinstance(export, EAbs) or \

isinstance(export, EThingAll):

ret[export.name].add(module.name)

elif isinstance(export, EThingWith):

ret[export.name].add(module.name)

for cname in export.cnames:

ret[export.name].add(cname.name)

return ret

There a number of cosmetic differences between these two versions:

- The Python version takes in pre-existing state optionally; otherwise it does the initialization and is referentially transparent. The Haskell version has no such notion of default state; we trust that the user can run the state monad with a simple

runState. - The Python version takes advantage of duck-typing to reduce code; I’ve also played fast and loose with the hypothetical object-oriented equivalent data structure.

- The Python version doesn’t have it’s code separated into

handleExport and handleCname, although we certainly could have with a few more inline functions.

But other than that, they pretty much read and operate precisely the same way, by mutating state. The Python version is also pretty much the end of the road; besides pushing the functions into their member objects, I believe there is no more “Pythonic” way to do it. The Haskell version is making me itchy though…

We’re never reading out state! This is a tell-tale sign that we should be using a Writer monad, not a State monad. There is a slight technical difficulty, though: Writer requires that the value being “logged” is a Monoid, and while, in theory, Map k (Set a) certainly has a a Monoid instance that does what we mean, the general Monoid instance for Map k v doesn’t cut it. Recall that a monoid describes data that I can “append” together to form another version of that data. For a SetMap,

- We want a monoid instance that takes two

SetMap structures and and unions the map, resolving duplicate by unioning those sets. - By default, we get a monoid instance that takes two

Map structures and unions the map, preferring the original value when a conflict occurs and discarding the rest.

Newtype to the rescue. A newtype is in order. We’ll call it SetMap. The recipe to follow for cooking up the newtype is as follows:

First, you need a newtype declaration. Explicitly naming the field in record syntax as unDataType is idiomatic, and invokes “unwrapping” the newtype wrapper from the object:

newtype SetMap k v = SetMap { unSetMap :: Map k (Set v) }

Next, you write the special type class instances you are interested in. (And possibly use deriving ... to pull in any old, default instances that are still good).

instance (Ord k, Ord v) => Monoid (SetMap k v) where

mempty = SetMap Map.empty

mappend (SetMap a) (SetMap b) = SetMap $ Map.unionWith Set.union a b

mconcat = SetMap . Map.unionsWith Set.union . map unSetMap

Perhaps some helper functions are in order:

setMapSingleton :: (Ord k, Ord v) => k -> v -> SetMap k v

setMapSingleton k v = SetMap $ Map.singleton k (Set.singleton v)

And voila!

getPublicNames :: Module -> Writer (SetMap Name ModuleName) ()

getPublicNames (Module _ m _ _ (Just exports) _ _) = mapM_ handleExport exports

where handleExport x = case x of

EVar (UnQual n) -> add n

EAbs (UnQual n) -> add n

EThingAll (UnQual n) -> add n

EThingWith (UnQual n) cs -> add n >> mapM_ handleCName cs

_ -> return ()

handleCName x = case x of

VarName n -> add n

ConName n -> add n

add n = tell (setMapSingleton n m) -- *

getPublicNames _ = return ()

Wait, we made our code more specific, and somehow it got longer! Perhaps, gentle reader, you might be slightly reassured by the fact that the new SetMap support code, which forms the bulk of what we wrote, is highly general and reusable, and, excluding that code, we’ve slightly reduced the code from add n = modify (Map.insertWith Set.union n (Set.singleton m)) to add n = tell (setMapSingleton n m).

Perhaps more importantly, we’ve now indicated to an enduser a new contract for this function: we will only ever write values out, and not change them.

Why were we using the monad again? Closer inspection further reveals that we’re never using bind (>>=). In fact, we’re not really using any of the power of a monad. Let’s make our code even more specific:

-- This operator is going into base soon, I swear!

(<>) = mappend

getPublicNames :: Module -> SetMap Name ModuleName

getPublicNames (Module _ m _ _ (Just exports) _ _) = foldMap handleExport exports

where handleExport x = case x of

EVar (UnQual n) -> make n

EAbs (UnQual n) -> make n

EThingAll (UnQual n) -> make n

EThingWith (UnQual n) cs -> make n <> foldMap handleCName cs

_ -> mempty

handleCName x = case x of

VarName n -> make n

ConName n -> make n

make n = setMapSingleton n m

getPublicNames _ = mempty

There’s not much of a space change, but users of this function now no longer need to execWriter; they can use the output right off the back (although they might need to unpack it eventually with unSetMap.

Technically, we never needed the monoid. In particular, setMapSingleton is forcing our code to cater to SetMap, and not Monoids in general (that wouldn’t really make any sense, either. Perhaps the notion of a “Pointed” Monoid would be useful). So we could have just written out all of our functions explicitly; more likely, we could have defined another set of helper functions to keep code size down. But you should still use the monoid. Monoids act certain ways (e.g. the monoid laws) and have a canonical set of functions that operate on them. By using those functions, you allow other people who have worked with monoids to quickly reason about your code, even if they’re not familiar with your specific monoid.

Postscript. I refactored real code while writing this blog post; none of the examples were contrived. I was originally planning on writing about “You ain’t gonna need it” and Haskell abstractions, but fleshing out this example ended up being a bit longer than I expected. Maybe next time…

Post-postscript. Anders Kaseorg writes in to mention that SetMap has been implemented in several places (Criterion.MultiMap, Holumbus.Data.MultiMap), but it hasn’t been put in a particularly general library.

May 26, 2010One of the papers I’ve been slowly rereading since summer began is “Functional Programming with Bananas, Lenses, Envelopes and Barbed Wire”, by Erik Meijer, Maarten Fokkinga and Ross Paterson. If you want to know what {cata,ana,hylo,para}morphisms are, this is the paper to read: section 2 gives a highly readable formulation of these morphisms for the beloved linked list.

Last time, however, my eyes got a little bit glassy when they started discussing algebraic data types, despite having used and defined them in Haskell; part of me felt inundated in a sea of triangles, circles and squiggles, and by the time they reached the laws for the basic combinators, I might as well have said, “It’s all math to me!”

A closer reading revealed that, actually, all of these algebraic operators can be written out in plain Haskell, and for someone who has been working with Haskell for a little bit of time, this can provide a smoother (albeit more verbose) reading. Thus, I present this translation guide.

Type operators. By convention, types are $A, B, C\ldots$ on the left and a, b, c... on the right. We distinguish these from function operators, though the paper does not and relies on convention to distinguish between the two.

$A \dagger B \Leftrightarrow$ Bifunctor t => t a b

$A_F \Leftrightarrow$ Functor f => f a

$A* \Leftrightarrow$ [a]

$D \parallel D' \Leftrightarrow$ (d, d')

$D\ |\ D' \Leftrightarrow$ Either d d'

$_I \Leftrightarrow$ Identity

$\underline{D} \Leftrightarrow$ Const d

$A_{(FG)} \Leftrightarrow$ (Functor f, Functor g) => g (f a)

$A_{(F\dagger G)} \Leftrightarrow$ (Bifunctor t, Functor f, Functor g) => Lift t f g a

$\boldsymbol{1} \Leftrightarrow$ ()

(For the pedantic, you need to add Hask Hask Hask to the end of all the Bifunctors.)

Function operators. By convention, functions are $f, g, h\ldots$ on the left and f :: a -> b, g :: a' -> b', h... on the right (with types unified as appropriate).

$f \dagger g \Leftrightarrow$ bimap f g :: Bifunctor t => t a a' -> t b b'

$f_F \Leftrightarrow$ fmap f :: Functor f => f a -> f b

$f \parallel g \Leftrightarrow$ f *** g :: (a, a') -> (b, b')

where f *** g = \(x, x') -> (f x, g x')

$\grave{\pi} \Leftrightarrow$ fst :: (a, b) -> a

$\acute{\pi} \Leftrightarrow$ snd :: (a, b) -> b

$f \vartriangle g \Leftrightarrow$ f &&& g :: a -> (b, b') -- a = a'

where f &&& g = \x -> (f x, g x)

$\Delta x \Leftrightarrow$ double :: a -> (a, a)

where double x = (x, x)

$f\ |\ g \Leftrightarrow$ asum f g :: Either a a' -> Either b b'

where asum f g (Left x) = Left (f x)

asum f g (Right y) = Right (g y)

$\grave{\i} \Leftrightarrow$ Left :: a -> Either a b

$\acute{\i} \Leftrightarrow$ Right :: b -> Either a b

$f\ \triangledown\ g \Leftrightarrow$ either f g :: Either a a' -> b -- b = b'

$\nabla x \Leftrightarrow$ extract x :: a

where extract (Left x) = x

extract (Right x) = x

$f \rightarrow g \Leftrightarrow$ (f --> g) h = g . h . f

(-->) :: (a' -> a) -> (b -> b') -> (a -> b) -> a' -> b'

$g \leftarrow f \Leftrightarrow$ (g <-- f) h = g . h . f

(<--) :: (b -> b') -> (a' -> a) -> (a -> b) -> a' -> b'

$(f \overset{F}{\leftarrow} g) \Leftrightarrow$ (g <-*- f) h = g . fmap h . f

(<-*-) :: Functor f => (f b -> b') -> (a' -> f a) -> (a -> b) -> a' -> b'

$f_I \Leftrightarrow$ id f :: a -> b

$f\underline{D} \Leftrightarrow$ const id f :: a -> a

$x_{(FG)} \Leftrightarrow$ (fmap . fmap) x

$VOID \Leftrightarrow$ const ()

$\mu f \Leftrightarrow$ fix f

Now, let’s look at the abides law:

$(f \vartriangle g)\ \triangledown\ (h \vartriangle j) = (f\ \triangledown\ h) \vartriangle (g\ \triangledown\ j)$

Translated into Haskell, this states:

either (f &&& g) (h &&& j) = (either f h) &&& (either g j)

Which (to me at least) makes more sense: if I want to extract a value from Either, and then run two functions on it and return the tuple of results, I can also split the value into a tuple immediately, and extract from the either “twice” with different functions. (Try running the function manually on a Left x and Right y.)

May 24, 2010Consider the following piece of code:

import Prelude hiding (catch)

import Control.Exception

main :: IO ()

main = do

t <- safeCall

unsafeCall t

putStrLn "Done."

safeCall :: IO String

safeCall = do

return alwaysFails `catch` errorHandler

--alwaysFails = throw (ErrorCall "Oh no!")

alwaysFails = error "Oh no!"

errorHandler :: SomeException -> IO String

errorHandler e = do

putStrLn "Caught"

return "Ok."

errorHandler_ e = errorHandler e >> return ()

unsafeCall :: String -> IO ()

unsafeCall = putStrLn

What might you expect the output to be? A straightforward transcription to Python might look like:

def main():

t = safeCall()

unsafeCall(t)

print "Done"

def safeCall():

try:

return alwaysFails()

except:

return errorHandler()

def alwaysFails():

raise Exception("Oh no!")

def errorHandler():

print "Caught."

return "Ok."

def unsafeCall(output):

print output

and anyone with a passing familiarity with the any strict language will say, “Of course, it will output:”

Caught.

Ok.

Done.

Of course, lazy exceptions (which is what error emits) aren’t called lazy for no reason; the Haskell code outputs:

*** Exception: Oh no!

What happened? Haskell was lazy, and didn’t bother evaluating the pure insides of the IO return alwaysFails until it needed it for unsafeCall, at which point there was no more catch call guarding the code. If you don’t believe me, you can add a trace around alwaysFails. You can also try installing errorHandler_ on unsafeCall.

What is the moral of the story? Well, one is that error is evil, but we already knew that…

- You may install exception handlers for most IO-based errors the obvious way. (If we had replaced

return alwaysFails with alwaysFails, the result would have been the strict one.) You may not install exception handlers for errors originating from pure code, since GHC reserves the right to schedule arbitrarily the time when your code is executed. - If pure code is emitting exceptions and you would like it to stop doing that, you’ll probably need to force strictness with

$! deepseq or rnf, which will force GHC to perform the computation inside your guarded area. As my readers point out, a good way to think about this is that the call is not what is exceptional, the structure is. - If you are getting an imprecise exception from pure code, but can’t figure out where, good luck! I don’t have a good recipe for figuring this out yet. (Nudge to my blog readers.)

Postscript. Note that we needed to use Control.Exception.catch. Prelude.catch, as per Haskell98, only catches IO-based errors.

May 21, 2010Now that term is over, I finally went an upgraded my laptop to Ubuntu 10.04 LTS, Lucid Lynx. The process went substantially more smoothly than Karmic went, but there were still a few hiccups.

Etckeeper. As always, you should set AVOID_COMMIT_BEFORE_INSTALL to 0 before attempting a release upgrade, since etckeeper hooks will be invoked multiple times and there’s nothing more annoying than getting the notice “etckeeper aborted the install due to uncommited changes, please commit them yourselves” because there is no way that’s going to work.

Well, this time round there was a different, hilarious bug:

/etc/etckeeper/post-install.d/50vcs-commit: 20:

etckeeper: Argument list too long

This has been reported as Bug #574244. Despite being an ominous warning, it is actually quite harmless, and you can complete the upgrade with:

aptitude update

aptitude full-upgrade

I had to kick the network due to broken DNS; your mileage may vary.

Wireless key management. I have not resolved this issue yet, but the basic symptom is that Ubuntu network-manager fails to remember WEP keys you have provided for secured networks. (I know you MIT students still on campus aren’t too bothered by this.) This appears to be a moderately widespread problems, as you have people revivifying permutations of this bug that occurred a long time ago. (In classic terrible bug reporting style, users are attaching themselves to old bug reports when they really should be filing a new regression for Lucid.)

From what I’ve investigated, I have been able to verify that connections to the keyring daemon are not working. There is a fix circulating in which you change your startup command from “gnome-keyring-daemon –start –components=pkcs11” to just “gnome-keyring-daemon”, although I suspect this isn’t really the “right” thing, and it doesn’t work for me anyway.

PHP. Ubuntu Lucid most notably has upgraded PHP 5.3.2, but they’ve also fiddled with some of the default settings. In my case, log_errors was causing quite interesting behavior for my scripts, and I have since coded my scripts to explicitly turn this ini setting off. You should save a copy of the output of php -i prior to the upgrade and compare it with the output afterwards.