October 25, 2010I’ve started formally learning OCaml (I’ve been reading ML since Okasaki, but I’ve never written any of it), and here are some notes about differences from Haskell from Jason Hickey’s Introduction to Objective Caml. The two most notable differences are that OCaml is impure and strict.

Features. Here are some features OCaml has that Haskell does not:

- OCaml has named parameters (

~x:i binds to i the value of named parameter x, ~x is a shorthand for ~x:x). - OCaml has optional parameters (

?(x:i = default) binds i to an optional named parameter x with default default). - OCaml has open union types (

[> 'Integer of int | 'Real of float] where the type holds the implementation; you can assign it to a type with type 'a number = [> 'Integer of int | 'Real of float] as a). Anonymous closed unions are also allowed ([< 'Integer of int | 'Real of float]). - OCaml has mutable records (preface record field in definition with

mutable, and then use the <- operator to assign values). - OCaml has a module system (only briefly mentioned today).

- OCaml has native objects (not covered in this post).

Syntax. Omission means the relevant language feature works the same way (for example, let f x y = x + y is the same)

Organization:

{- Haskell -}

(* OCaml *)

Types:

() Int Float Char String Bool (capitalized)

unit int float char string bool (lower case)

Operators:

== /= .&. .|. xor shiftL shiftR complement

= == != land lor lxor [la]sl [la]sr lnot

(arithmetic versus logical shift in Haskell depends on the type of the Bits.)

Float operators in OCaml: affix period (i.e. +.)

Float casting:

floor fromIntegral

int_of_float float_of_int

String operators:

++ !!i

^ .[i] (note, string != char list)

Composite types:

(Int, Int) [Bool]

int * int bool list

Lists:

x : [1, 2, 3]

x :: [1; 2; 3]

Data types:

data Tree a = Node a (Tree a) (Tree a) | Leaf

type 'a tree = Node of 'a * 'a tree * 'a tree | Leaf;;

(note that in OCaml you’d need Node (v,l,r) to match, despite there not actually being a tuple)

Records:

data MyRecord = MyRecord { x :: Int, y :: Int }

type myrecord = { x : int; y : int };;

Field access:

x r

r.x

Functional update:

r { x = 2 }

{ r with x = 2 }

(OCaml records also have destructive update.)

Maybe:

data Maybe a = Just a | Nothing

type 'a option = None | Some of 'a;;

Array:

readArray a i writeArray a i v

[|1; 3|] a.(i) a.(i) <- v

References:

newIORef writeIORef readIORef

ref := !

Top level definition:

x = 1

let x = 1;;

Lambda:

\x y -> f y x

fun x y -> f y x

Recursion:

let f x = if x == 0 then 1 else x * f (x-1)

let rec f x = if x == 0 then 1 else x * f (x-1)

Mutual recursion (note that Haskell let is always recursive):

let f x = g x

g x = f x

let rec f x = g x

and g x = f x

Function pattern matching:

let f 0 = 1

f 1 = 2

let f = function

| 0 -> 1

| 1 -> 2

(note: you can put pattern matches in the arguments for OCaml, but lack of an equational function definition style makes this not useful)

Case:

case f x of

0 -> 1

y | y > 5 -> 2

y | y == 1 || y == 2 -> y

_ -> -1

match f x with

| 0 -> 1

| y when y > 5 -> 2

| (1 | 2) as y -> y

| _ -> -1

Exceptions:

Definition

data MyException = MyException String

exception MyException of string;;

Throw exception

throw (MyException "error")

raise (MyException "error")

Catch exception

catch expr $ \e -> case e of

x -> result

try expr with

| x -> result

Assertion

assert (f == 1) expr

assert (f == 1); expr

Undefined/Error:

error "NYI"

failwith "NYI"

Build:

ghc --make file.hs

ocamlopt -o file file.ml

Run:

runghc file.hs

ocaml file.ml

Type signatures. Haskell supports specifying a type signature for an expression using the double colon. OCaml has two ways of specifying types, they can be done inline:

let intEq (x : int) (y : int) : bool = ...

or they can be placed in an interface file (extension mli):

val intEq : int -> int -> bool

The latter method is preferred, and is analogous to an hs-boot file as supported by GHC.

Eta expansion. Polymorphic types in the form of '_a can be thought to behave like Haskell’s monomorphism restriction: they can only be instantiated to one concrete type. However, in Haskell the monomorphism restriction was intended to avoid extra recomputation for values that a user didn’t expect; in OCaml the value restriction is required to preserve the soundness of the type system in the face of side effects, and applies to functions too (just look for the tell-tale '_a in a signature). More fundamentally, 'a indicates a generalized type, while '_a indicates a concrete type which, at this point, is unknown—in Haskell, all type variables are implicitly universally quantified, so the former is always the case (except when the monomorphism restriction kicks in, and even then no type variables are ever shown to you. But OCaml requires monomorphic type variables to not escape from compilation units, so there is a bit of similarity. Did this make no sense? Don’t panic.)

In Haskell, we’d make our monomorphic value polymorphic again by specifying an explicit type signature. In OCaml, we generalize the type by eta expanding. The canonical example is the id function, which when applied to itself (id id) results in a function of type '_a -> '_a (that is, restricted.) We can recover 'a -> 'a by writing fun x -> id id x.

There is one more subtlety to deal with OCaml’s impurity and strictness: eta expansion acts like a thunk, so if the expression you eta expand has side effects, they will be delayed. You can of course write fun () -> expr to simulate a classic thunk.

Tail recursion. In Haskell, you do not have to worry about tail recursion when the computation is lazy; instead you work on putting the computation in a data structure so that the user doesn’t force more of it than they need (guarded recursion), and “stack frames” are happily discarded as you pattern match deeper into the structure. However, if you are implementing something like foldl', which is strict, you’d want to pay attention to this (and not build up a really big thunk.)

Well, OCaml is strict by default, so you always should pay attention to making sure you have tail calls. One interesting place this comes up is in the implementation of map, the naive version of which cannot be tail-call optimized. In Haskell, this is not a problem because our map is lazy and the recursion is hidden away in our cons constructor; in OCaml, there is a trade off between copying the entire list to get TCO, or not copying and potentially exhausting stack space when you get big lists. (Note that a strict map function in Haskell would have the same problem; this is a difference between laziness and strictness, and not Haskell and OCaml.)

File organization. A single file OCaml script contains a list of statements which are executed in order. (There is no main function).

The moral equivalent of Haskell modules are called compilation units in OCaml, with the naming convention of foo.ml (lower case!) corresponding to the Foo module, or Foo.foo referring to the foo function in Foo.

It is considered good practice to write interface files, mli, as described above; these are like export lists. The interface file will also contain data definitions (with the constructors omitted to implement hiding).

By default all modules are automatically “imported” like import qualified Foo (no import list necessary). Traditional import Foo style imports (so that you can use names unqualified) can be done with open Foo in OCaml.

Module system. OCaml does not have type classes but it does have modules and you can achieve fairly similar effects with them. (Another classic way of getting type class style effects is to use objects, but I’m not covering them today.) I was going to talk about this today but this post is getting long so maybe I’ll save it for another day.

Open question. I’m not sure how much of this is OCaml specific, and how much generalizes to all ML languages.

Update. ocamlrun is not the same as runghc; I’ve updated the article accordingly.

Update 2. Raphael Poss has written a nice article in reverse: Haskell for OCaml programmers

October 22, 2010I am a member of a group called the Assassins’ Guild. No, we don’t kill people, and no, we don’t play the game Assassin. Instead, we write and run competitive live action role-playing games: you get some game rules describing the universe, a character sheet with goals, abilities and limitations, and we set you loose for anywhere from four hours to ten days. In this context, I’d like to describe a situation where applying the rule Don’t Repeat Yourself can be harmful.

The principle of Don’t Repeat Yourself comes up in a very interesting way when game writers construct game rules. The game rules are rather complex: we’d like players to be able to do things like perform melee attacks, stab each other in the back, conjure magic, break down doors, and we have to do this all without actually injuring anyone or harming any property, so, in a fashion typical of MIT students, we have “mechanics” for performing these in-game actions (for example, in one set of rules, a melee attack can be declared with “Wound 5”, where 5 is your combat rating, and if another individual has a CR of 5 or greater, they can declare “Resist”; otherwise, they have to role-play falling down unconscious and bleeding. It’s great fun.) Because there are so many rules necessary to construct a reasonable base universe, there is a vanilla, nine-page rules sheet that most gamewriters adapt for their games.

Of course, the rules aren’t always the same. One set of GMs (the people who write and run the game) may decide that a single CR rating is not enough, and that people should have separate attack and defense ratings. Another set of GMs might introduce robotic characters, who cannot die from bleeding out. And so forth.

So when we give rules out to players, we have two possibilities: we can repeat ourselves, and simply give them the full, amended set of rules. Or we can avoid repeating ourselves, and give out the standard rules and a list of errata—the specific changes made in our universe. We tend to repeat ourselves, since it’s easier to do with our game production tools. But an obvious question to ask is, which approach is better?

The answer is, of course, it depends.

- Veteran players who are well acquainted with the standard set of rules don’t need the entire set of rules given to them every time they play a game; instead, it would be much easier and more efficient for them if they were just given the errata sheet, so they can go, “Oh, hm, that’s different, ok” and go and concoct strategies for this altered game universe. This is particularly important for ten-days, where altered universe rules can greatly influence plotting and strategy.

- For new players who have never played a game before, being given a set of rules and then being told, “Oh, but disregard that and that and here is an extra condition for that case” would be very confusing! The full rules, repeated for the first few times they play a game, is helpful.

I think this same principle applies to Don’t Repeat Yourself as applied in software development. It’s good and useful to adopt a compact, unique representation for any particular piece of code or data, but don’t forget that a little bit of redundancy will greatly help out people learning your system for the first time! And to get the best of both worlds, you shouldn’t even have to repeat yourself: you should make the computer do it for you.

Postscript. For the curious, here is a PDF of the game rules we used for a game I wrote in conjunction with Alex Gurany and Jonathan Chapman, The Murder of Jefferson Douglass (working name A Dangerous Game).

Postscript II. When has repeating yourself been considered good design?

- Perl wants programmers to have to say as little as possible to get the job done, and this has given it a reputation as a “write only language.”

- Not all code that looks the same should be refactored into a function; there should be some logical unity to what is factored out.

- Java involves writing copious amounts of code: IDEs generate code for

hashCode and equals, and you possibly tweak it after the fact. Those who like Java controversially claim that this prevents Java programmers from doing too much damage (though some might disagree.) - When you write essays, even if you’ve already defined a term fifty pages ago, it’s good to refresh a reader’s memory. This is especially true for math textbooks.

- Haskell challenges you to abstract as much mathematically sound structure as possible. As a result, it makes people’s heads hurt, leads to combinator zoos up to the wazoo. But it’s also quite beneficial for even moderately advanced users.

Readers are encouraged to come up with more examples.

October 20, 2010In which the author muses that “semi-formal methods” (that is, non computer-assisted proof writing) should take a more active role in allowing software engineers to communicate with one another.

C++0x has a lot of new, whiz-bang features in it, one of which is the atomic operations library. This library has advanced features that enable compiler writers and concurrency library authors to take advantage of a relaxed memory model, resulting in blazingly fast concurrent code.

It’s also ridiculously bitchy to get right.

The Mathematizing C++ Concurrency project at Cambridge is an example of what happens when you throw formal methods at an exceedingly tricky specification: you find bugs. Lots of them, ranging from slight clarifications to substantive changes. As of a talk Mark Batty gave on Monday there are still open problems: for example, the sequential memory model isn’t actually sequential in all cases. You can consult the Pre-Rapperswil paper §4 for more details.

Which brings me to a piercing question:

When software engineers want to convince one another that their software is correct, what do they do?

This particular question is not about proving software “correct”—skeptics rightly point out that in many cases the concept of “correctness” is ill-defined. Instead, I am asking about communication, along the lines of “I have just written an exceptionally tricky piece of code, and I would now like to convince my coworker that I did it properly.” How do we do this?

We don’t.

Certainly there are times when the expense of explaining some particular piece of code is not useful. Maybe the vast majority of code we write is like this. And certainly we have mechanisms for “code review.” But the mostly widely practiced form of code review revolves around the patch and frequently is only productive when the original programmer is still around and still remembers how the code works. Having a reviewer read an entire program has been determined to be a frustrating and inordinately difficult thing to do—so instead, we focus on style and local structure and hope no one writes immaculate evil code. Security researchers may review code and look for patterns of use that developers tend to “get wrong” and zero in on them. We do have holistic standards, but they tend towards “it seems to work,” or, if we’re lucky, “it doesn’t break any automated regression tests.”

What we have is a critical communication failure.

One place to draw inspiration from is that of proof in mathematics. The proof has proven to be an useful tool at communicating mathematical ideas from one person to another, with a certain of rigor to avoid ambiguity and confusion, but not computer-level formality: unlike computer science, mathematicians have only recently begun to formalize proofs for computer consumption. Writing and reading proofs is tough business, but it is the key tool by which knowledge is passed down.

Is a program a proof? In short, yes. But it is a proof of the wrong thing: that is, it precisely specifies what the program will do, but subsequently fails to say anything beyond that (like correctness or performance or any number of other intangible qualities.) And furthermore, it is targeted at the computer, not another person. It is one of the reasons why “the specification of the language is the compiler itself” is such a highly unsatisfying answer.

Even worse, at some point in time you may have had in your head a mental model of how some dark magic worked, having meticulously worked it out and convinced yourself that it worked. And then you wrote // Black magic: don't touch unless you understand all of this! And then you moved on and the knowledge was lost forever, to be rediscovered by some intrepid soul who arduously reread your code and reconstructed your proof. Give them a bone! And if you haven’t even convinced yourself that the code for your critical section will do the right thing, shame on you! (If your code is simple, it should have been a simple proof. If your code is complicated, you probably got it wrong.)

You might argue that this is just the age-old adage “we need more documentation!” But there is a difference: proofs play a fundamentally different role than just documentation. Like programs, they must also be maintained, but their maintenance is not another chore to be done, inessential to the working of your program—rather, it should be considered a critical design exercise for assuring you and your colleagues of that your new feature is theoretically sound. It is stated that good comments say “Why” not “What.” I want to demand rigor now.

Rigor does not mean that a proof needs to be in “Greek letters” (that is, written in formal notation)—after all, such language is frequently off putting to those who have not seen it before. But it’s often a good idea, because formal language can capture ideas much more precisely and succinctly than English can.

Because programs frequently evolve in their scope and requirements (unlike mathematical proofs), we need unusually good abstractions to make sure we can adjust our proofs. Our proofs about higher level protocols should be able to ignore the low level details of any operation. Instead, they should rely on whatever higher level representation each operation has (whether its pre and post-conditions, denotational semantics, predicative semantics, etc). We shouldn’t assume our abstractions work either (nor should we throw up our hands and say “all abstractions are leaky”): we should prove that they have the properties we think they should have (and also say what properties they don’t have too). Of course, they might end up being the wrong properties, as is often the case in evolutionary software, but often, proof can smoke these misconceptions out.

October 18, 2010I’ve been having some vicious fun over the weekend hacking up a little tool called MMR Hammer in Haskell. I won’t bore you with the vagaries of multimaster replication with Fedora Directory Server; instead, I want to talk about rapidly prototyping scripts in Haskell—programs that are characterized by a low amount of computation and a high amount of IO. Using this script as a case study, I’ll describe how I approached the problem, what was easy to do and what took a little more coaxing. In particular, my main arguments are:

- In highly specialized scripts, you can get away with not specifying top-level type signatures,

- The IO monad is the only monad you need, and finally

- You can and should write hackish code in Haskell, and the language will impose just the right amount of rigor to ensure you can clean it up later.

I hope to convince you that Haskell can be a great language for prototyping scripts.

What are the characteristics of rapidly prototyping scripts? There are two primary goals of rapid prototyping: to get it working, and to get it working quickly. There are a confluence of factors that feed into these two basic goals:

- Your requirements are immediately obvious—the problem is an exercise of getting your thoughts into working code. (You might decide later that your requirements are wrong.)

- You have an existing API that you want to use, which let’s you say “I want to set the X property to Y” instead of saying “I will transmit a binary message of this particular format with this data over TCP.” This should map onto your conception of what you want to do.

- You are going to manually test by repeatedly executing the code path you care about. Code that you aren’t developing actively will in general not get run (and may fail to compile, if you have lots of helper functions). Furthermore, running your code should be fast and not involve a long compilation process.

- You want to avoid shaving yaks: solving unrelated problems eats up time and prevents your software from working; better to hack around a problem now.

- Specialization of your code for your specific use-case is good: it makes it easier to use, and gives a specific example of what a future generalization needs to support, if you decide to make your code more widely applicable in the future (which seems to happen to a lot of prototypes.)

- You’re not doing very much computationally expensive work, but your logic is more complicated than is maintainable in a shell script.

What does a language that enables rapid prototyping look like?

- It should be concise, and at the very least, not make you repeat yourself.

- It should “come with batteries,” and at least have the important API you want to use.

- It should be interpreted.

- It should be well used; that is, what you are trying to do should exist somewhere in the union of what other people have already done with the language. This means you are less likely to run into bizarre error conditions in code that no one else runs.

- It should have a fast write-test-debug cycle, at least for small programs.

- The compiler should not get in your way.

General prototyping in Haskell. If we look at our list above, Haskell has several aspects that recommend it. GHC has a runghc command which allows you to interpret your script, which means for quick prototyping. Functional programming encourages high amounts of code reuse, and can be extremely concise when your comfortable with using higher-order functions. And, increasingly, it’s growing a rather large set of batteries. In the case of LDAP MMR, I needed a bindings for the OpenLDAP library, which John Goerzen had already written. A great start.

The compiler should not get in your way. This is perhaps the most obvious problem for any newcomer to Haskell: they try to some pedestrian program and the compiler starts bleating at them with a complex type error, rather than the usual syntax error or runtime error. As they get more acquainted with Haskell, their mental model of Haskell’s type system improves and their ability to fix type errors improves.

The million dollar question, then, is how well do you have to know Haskell to be able to quickly resolve type errors? I argue, in the case of rapid prototyping in Haskell, not much at all!

One simplifying factor is the fact that the functions you write will usually not be polymorphic. Out of the 73 fully implemented functions in MMR Hammer, only six have inferred nontrivial polymorphic type signatures, all but one of these is only used single type context.

For these signatures, a is always String:

Inferred type: lookupKey :: forall a.

[Char] -> [([Char], [a])] -> [a]

Inferred type: lookupKey1 :: forall a.

[Char] -> [([Char], [a])] -> Maybe a

m is always IO, t is always [String] but is polymorphic because it’s not used in the function body:

Inferred type: mungeAgreement :: forall (m :: * -> *).

(Monad m) =>

LDAPEntry -> m LDAPEntry

Inferred type: replicaConfigPredicate :: forall t (m :: * -> *).

(Monad m) =>

([Char], t) -> m Bool

a here is always (String, String, String); however, this function is one of the few truly generic ones (it’s intended to be an implementation of msum for IO):

Inferred type: tryAll :: forall a. [IO a] -> IO a

And finally, our other truly generic function, a convenience debugging function:

Inferred type: debugIOVal :: forall b. [Char] -> IO b -> IO b

I claim that for highly specific, prototype code, GHC will usually infer fairly monomorphic types, and thus you don’t need to add very many explicit type signatures to get good errors. You may notice that MMR Hammer has almost no explicit type signatures—I argue that for monomorphic code, this is OK! Furthermore, this means that you only need to know how to use polymorphic functions, and not how to write them. (To say nothing of more advanced type trickery!)

Monads, monads, monads. I suspect a highly simplifying assumption for scripts is to avoid using any monad besides IO. For example, the following code could have been implemented using the Reader transformer on top of IO:

ldapAddEntry ldap (LDAPEntry dn attrs) = ...

ldapDeleteEntry ldap (LDAPEntry dn _ ) = ...

printAgreements ldap = ...

suspendAgreements ldap statefile = ...

restoreAgreements ldap statefile = ...

reinitAgreements ldap statefile = ...

But with only one argument being passed around, which was essentially required for any call to the API (so I would have done a bit of ask calling anyway), so using the reader transformer would have probably increased code size, as all of my LDAP code would have then needed to be lifted with liftIO.

Less monads also means less things to worry about: you don’t have to worry about mixing up monads and you can freely use error as a shorthand for bailing out on a critical error. In IO these get converted into exceptions which are propagated the usual way—because they are strings, you can’t write very robust error handling code, but hey, prototypes usually don’t have error handling. In particular, it’s good for a prototype to be brittle: to prefer to error out rather than to do some operation that may be correct but could also result in total nonsense.

Hanging lambda style also makes writing out code that uses bracketing functions very pleasant. Here are some example:

withFile statefile WriteMode $ \h ->

hPutStr h (serializeEntries replicas)

forM_ conflicts $ \(LDAPEntry dn attrs) ->

putStrLn dn

Look, no parentheses!

Reaping the benefits. Sometimes, you might try writing a program in another language for purely pedagogical purposes. But otherwise, if you know a language, and it works well for you, you won’t really want to change unless there are compelling benefits. Here are the compelling benefits of writing your code in Haskell:

When you’re interacting with the outside world, you will fairly quickly find yourself wanting some sort of concurrent execution: maybe you want to submit a query but timeout if it doesn’t come back in ten seconds, or you’d like to do several HTTP requests in parallel, or you’d like to monitor a condition until it is fulfilled and then do something else. Haskell makes doing this sort of thing ridiculously easy, and this is a rarity among languages that can also be interpreted.

Because you don’t have automatic tests, once you’ve written some code and manually verified that it works, you want it to stay working even when you’re working on some other part of the program. This is hard to guarantee if you’ve built helper functions that need to evolve: if you change a helper function API and forget to update all of its call sites, your code will compile but when you go back and try running an older codepath you’ll find you’ll have a bunch of trivial errors to fix. Static types make this go away. Seriously.

Haskell gives you really, really cheap abstraction. Things you might have written out in full back in Python because the more general version would have required higher order functions and looked ugly are extremely natural and easy in Haskell, and you truly don’t have to say very much to get a lot done. A friend of mine once complained that Haskell encouraged you to spend to much time working on abstractions; this is true, but I also believe once you’ve waded into the fields of Oleg once, you’ll have a better feel in the future for when it is and isn’t appropriate.

Rigorous NULL handling with Maybe gets you thinking about error conditions earlier. Many times, you will want to abort because you don’t want to bother dealing with that error condition, but other times you’ll want to handle things a little more gracefully, and the explicit types will always remind you when that is possible:

case mhost of

(Just host) -> do

let status = maybe "no status found" id mstatus

printf ("%-" ++ show width ++ "s : %s\n") host status

_ -> warnIO ("Malformed replication agreement at " ++ dn)

Slicing and dicing input in a completely ad hoc way is doable and concise:

let section = takeWhile (not . isPrefixOf "profile") . tail

. dropWhile (/= "profile default") $ contents

getField name = let prefix = name ++ " "

in evaluate . fromJust . stripPrefix prefix

. fromJust . find (isPrefixOf prefix)

$ section

But at the same time, it’s not too difficult to rip out this code for a real parsing library for not too many more lines of code. This an instance of a more general pattern in Haskell, which is that moving from brittle hacks to robust code is quite easy to do (see also, static type system.)

Some downsides. Adding option parsing to my script was unreasonably annoying, and after staring at cmdargs and cmdlib for a little bit, I decided to roll my own with getopt, which ended up being a nontrivial chunk of code in my script anyway. I’m not quite sure what went wrong here, but part of the issue was my really specialized taste in command line APIs (based off of Git, no less), and it wasn’t obvious how to use the higher level libraries to the effect I wanted. This is perhaps witnessed by the fact that most of the major Haskell command line applications also roll their own command parser. More on this on another post.

Using LDAP was also an interesting exercise: it was a fairly high quality library that worked, but it wasn’t comprehensive (I ended up submitting a patch to support ldap_initialize) and it wasn’t battle tested (it had no workaround for a longstanding bug between OpenLDAP and Fedora DS—more on that in another post too.) This is something that gets better with time, but until then expect to work closely with upstream for specialized libraries.

October 15, 2010This post is for those of you have always wondered why we have a forall keyword in Haskell but no exists keyword. Most of the existing tutorials on the web take a very operational viewpoint to what an existential type is, and show that placing the forall in the “right place” results in the correct behavior. I’m going to take a different approach and use the Curry-Howard isomorphism to explain the translation. Some of the logic examples are shamelessly stolen from Aaron Coble’s Logic and Proof lecture notes.

First, a little logic brush up. (Feel free to skip.)

At the very bottom of the hierarchy of logic systems lies propositional logic. Whenever you write a non-polymorphic function in Haskell, your function definition corresponds to a statement in propositional logic—this is the simply typed lambda calculus. You get some propositional symbols P, Q and R (corresponding to types) and some logical connectives $\lnot \land \lor \to \iff$. In particular, $\to$ corresponds to the function arrow ->, so you can read $P \to Q$ as the type P -> Q.

$\forall xy.\ P(x) = P(y)$

The next step up is first-order predicate logic, which allows you to use the quantifiers ∀ and ∃ on variables ranging over individuals x, y and z (the predicates take individuals and return propositions). Logical formulas in this system start to look a lot like Haskell polymorphism, but it actually corresponds to dependent types: individuals are terms, not types.

For the purpose of this post, we’ll instead have x, y and z to range over propositions (types), except for two examples of first order logic to get some intuition for quantifiers. Then polymorphic function definitions are statements in what is called propositional second order logic.

Propositional second order logic gives us a bit of rope, and we can do some fairly unintuitive things with it. Existential types are one such application. However, most Haskellers have a pretty good intuition for polymorphic functions like id :: a -> a, which actually have an ∀ quantifier at the very beginning, like id :: forall a. a -> a or $\forall x. x \to x$. What I’d like to do next is make the connection between our intuitive sense of polymorphic functions and our intuitive sense of a universal quantifier.

Consider the following English sentence: All professors can teach and do research. We can translate this into a statement in first-order logic (x ranging over individuals):

$\forall x.\ \mathrm{professor}(x) \to \mathrm{teaches}(x) \land \mathrm{researches}(x)$

The intuition for the trick of “narrowing” a universally quantified variable by placing it in an implication corresponds directly to the implicit dictionary passing that occurs when you use a type class (which also narrows a universally quantified variable).

We can do similar translations for the existential quantifier. Everybody loves somebody and there is somebody that everybody loves correspond to, respectively:

$\forall x\ \exists y\ \mathrm{loves}(x, y)$

$\exists y\ \forall x\ \mathrm{loves}(x, y)$

Take a moment to convince yourself that these are not the same statements, and figure out which direction implication goes.

We’ll now jump straight to the implication equivalences, which are the punchline, so to speak. Here, x ranges over propositions (i.e. types).

$(\exists x\ A[x]) \to B \equiv \forall x\ (A[x] \to B)$

$(\forall x\ A[x]) \to B \equiv \exists x\ (A[x] \to B)$

Consider the first equivalence: intuitively, it states that we can simulate a function that takes an existential type by using forall x. (A x -> B). This is precisely the existential data constructor:

data OpaqueBox = forall a. OpaqueBox a

which has the type forall a. (a -> OpaqueBox).

The second proposition is a little trickier to grasp: in the right to left direction, it seems clear that if there exists an inference A(x) to B for some x, if I provide all x I will get B. However, from left to right, if I provide all A(x) to get B, one of those A(x) will have to have been used but I have no good way of figuring out which one.

We can rigorously prove this equivalence with sequent calculus. We can think of these as “deduction rules” much like modus ponens (if A then B, A; therefore, B). However, statements in the sequent calculus take the form $\Gamma \vdash \Delta$, where Γ is the set of propositions which conjunctively form the assumption, and Δ is the set of propositions which disjunctively form the result. (The $\vdash$ is called a “turnstile” and indicates implication.)

$\cfrac{\Gamma \vdash A, \Delta \qquad \Sigma, B \vdash \Pi}{\Gamma, \Sigma, A\rightarrow B \vdash \Delta, \Pi} \quad ({\rightarrow }L)$ $\cfrac{\Gamma, A \vdash B, \Delta}{\Gamma \vdash A \rightarrow B, \Delta} \quad ({\rightarrow}R)$

$\cfrac{\Gamma, A[t/x] \vdash \Delta}{\Gamma, \forall x A \vdash \Delta} \quad ({\forall}L)$ $\cfrac{\Gamma \vdash A[y/x], \Delta}{\Gamma \vdash \forall x A, \Delta} \quad ({\forall}R)$

$\cfrac{\Gamma, A[y/x] \vdash \Delta}{\Gamma, \exists x A \vdash \Delta} \quad ({\exists}L)$ $\cfrac{\Gamma \vdash A[t/x], \Delta}{\Gamma \vdash \exists x A, \Delta} \quad ({\exists}R)$

$\forall L$ and $\exists R$, in particular, are quite interesting: $\forall L$ says I can make any assumed proposition “polymorphic” by picking some subterm and replacing all instances of it with a newly universally quantified variable (it’s a stronger assumption, so we’re weakening our entailment). We can indeed do this in Haskell (as one might transform (Int -> Bool) -> Int -> Bool into (a -> b) -> a -> b), so long as our proof doesn’t peek at the actual type to perform its computation. $\exists R$, on the other hand, says that I can take any resulting proposition and “hide” my work by saying something weaker: instead of A[t], I merely say there exists some x for which A[x] is true. This corresponds nicely to the intuition of an existential type hiding representation. Another nice duality is that universal quantification hides information inside the proof, while existential quantification hides information outside of the proof.

$\forall R$ and $\exists L$ don’t do as much work, but they are a little tricky to use: any universal quantification on the right side of the turnstile can create/destroy a free variable, and any existential quantification on the left side can create/destroy a free variable. Note that $\forall L$ and $\exists R$ cannot be used this way; while they can use existing free variables, they can’t create or destroy them.

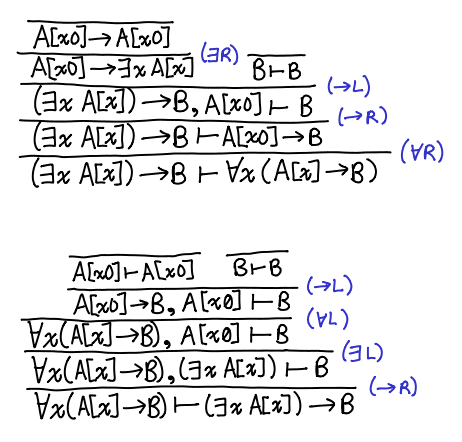

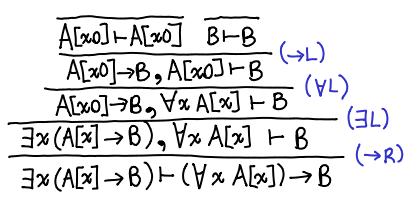

Here is the proof in both directions of the equivalence. What we’re trying to prove lives on the bottom; tautologies are at the top.

The proofs are nicely symmetrical: one uses ∀L and ∃L, and the other ∀R and ∃R. The application of the →R “uncurries” each entailment. Furthermore, the fact that both proofs are constructive indicates that there is this equivalence is one that can be witnessed by a Haskell program! You can check out a Coq version of the proof from kmc.

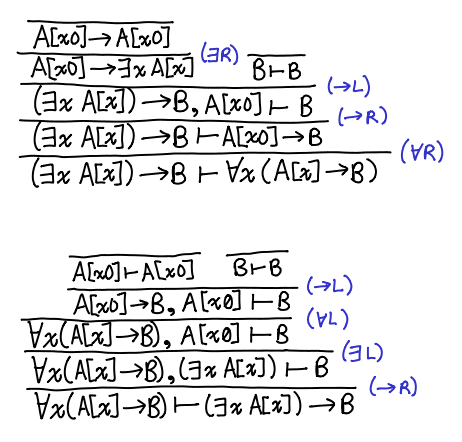

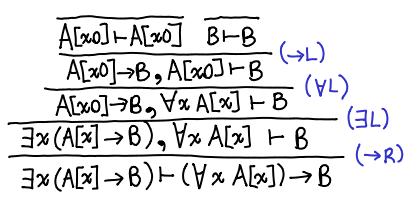

Postscript. I picked the wrong equivalence initially, but I felt it would be a shame not to share it. Here is the proof for: $\exists x\ (A[x] \to B) \vdash (\forall x\ A[x]) \to B$.

This is done entirely with intuitionistic logic: the other direction requires classical logic. This is left as an exercise for the reader, the solution is here by monochrom. There is also a version from kmc in Coq in both directions. This result has an interesting implication for existentials over functions: we can translate from an existential to a universal, but not back!

October 13, 2010Unattributed to protect the innocent. (But you can probably guess.)

“And so these poor programmers, they had to drink this much whiskey to get the job done.” [triumphantly produces a bottle of whiskey and places it on the table.] “And this group of programmers did X, and how hard was that? Two bottles of whiskey.” [places two more bottles of whiskey on the table] “But that wasn’t fast enough. And so this group of programmers did Y. Four bottles of whiskey.” [four more bottles appear] “As you can see, this is requiring an exponentially increasing amount of whiskey.”

“Locks and semaphores are Soviet-era technology.”

On the subject of optimizing algorithms by porting them to lower level hardware description languages: “There are much more productive things for you young PhD students to be doing.”

On Tycho Brahe: “Drunk. Irascible. Fat. Brilliant. Definitely a role model.”

“One of the benefits of Hindley-Milner inference is that it’s quite efficient.” “You mean EXPTIME-complete.”

“Any god-fearing man should write his type signatures.”

On the absence of let generalization in GHC 7.0: “It’s not a feature, it’s a bug with benefits.”

“You would think that explicit type signatures would make the type checker’s job easier, but actually they are making the checker’s job harder.”

“I strongly recommend this paper, but do carry along a jar of aspirin.”

October 11, 2010A complaint I once heard about SIPB is that it leans too much towards the system administration side: we proudly display the services we have deployed and neglect to talk very much about actually programming or conducting novel computer science research (despite the fact that we are very much programmers and some of us are quite research oriented.) So if you’re really not at all interested in that sort of thing (like me) you might think to yourself, “That’s very nice” and go and do something else.

I think this is a mistake, and that even a short period of time administrating a system larger than your personal laptop can be tremendously helpful for any work with computers you may do, whether it’s software engineering or computer science. System administration is advanced computer literacy, it’s a bit like knowing how to operate a complicated telescope. Sure, it has nothing to do with actually studying the stars or the fundamental nature of computation, but you’ll sure get a lot more done if you’re not fumbling with your equipment. System administration is what we do to get other things done.

“Sure,” you may say, “but any practicing software engineer or computer scientist will pick up the bits and pieces of system administration they need to know.” Yes, but I will argue that unless you actively seek out sysadmin tasks, the set of skills you acquire will be the bare minimum to get what you need done. Much like strength training, you are only actively gaining sysadmin skills when you’ve been pushed beyond what you’re ordinarily capable of. But unlike strength training, the benefits don’t go away after you’re done: you keep using the tricks you learned for everyday tasks. And what better way to push yourself further than to administrate a system for others?

To which I say, SIPB is a fantastic opportunity for this sort of work. It’s rare to be able to work on systems of this size and scope as an undergraduate: class projects don’t even come close. And if you’re a graduate student, you may be building complex systems but you may also be the only one using them, and having users is an enlightening experience (despite the fact that we sometimes complain, “Can we deprecate users?”)

Here are some personal perks that I’ve picked up from SIPB:

- I now know how to RAID two disks together, forced to learn how to do so because of standard operating procedure in our project. It’s not something that I would have gone out and done before, but now I consider it essential to any new physical server I setup. Because, for disk failure, it’s not a question of if, it’s a question of when.

- I now know how to effectively source dive code. I’ve used this to help communicate more effectively with upstream, fix bugs, add features, fill in for missing documentation, and more.

- I understand how the MIT Athena infrastructure works at a much more fundamental level.

October 8, 2010I recently sent Greg Weber an email about his xss-sanitize package, cautioning about his reuse of the pandoc sanitization algorithm for his own package. He responded (with good justification) that a mere caution was not very constructive! So here is my response, the “HTML purification manifesto,” which HTML Purifier follows and which I think is a prerequisite for any industrial grade HTML sanitization library. I will admit it’s a tough manifesto to follow, and I’ll talk about when you can get away with not following it to the line.

The manifesto.

Use semantic data structures.

Whitelist and validate everything.

Output only what all browsers understand.

Use semantic data structures. Many filters attempt to never build up a DOM or tokenized representation of their HTML during the processing stage for performance reasons. It turns out this makes securing your filter notoriously difficult. Consider the XSS cheatsheet: see how many of the vulnerabilities involve non-well-formed HTML. If you require HTML to be converted into a DOM, and then serialize it out again, you have a pretty good guarantee that the result will be well-formed, and you eliminate all of those vulnerabilities.

This must be applied to all sublanguages in HTML, and not just HTML itself. You also have to be careful that your serializer produces standards compliant output. For example, a vulnerability in HTML Purifier itself was caused by a lax adherence to standards. As it states:

HTML Purifier’s fix also percent-encodes any other reserved character in each segment of a URI. This was actually a previously identified section of relaxed standards compliance, and strictly enforcing the rules eliminated the vulnerability.

Semantic data structures are also useful for implementing extra features—for example extracting content from the document or converting all URIs into absolute form—and makes validation and manipulation tremendously easier. It will also be critical for the next step.

Whitelist and validate everything. Whitelisting is a well accepted practice, and I will not harp on it here. However, there are subtleties in its application. In order to understand the first part of the manifesto, you need to understand what everything means. At first glance, the obvious things that you might have to whitelist are elements and attributes. But if you decide to allow the href attribute, you need to make sure you whitelist URI schemes too—three things to whitelist. And heaven forbid you decide to allow style attributes!

It’s far better to take a different approach to whitelisting: every time you encounter an attribute, figure out what its sensible values are, and validate it to a whitelist. Think you don’t need to validate that height? Consider the imagecrash attack, which can bluescreen an unwitting Windows user simply by setting the width and height of an image to 999999. Complicated attribute? It may have further structure, so expand it into the appropriate semantic data structure! (The most obvious candidates are URIs and CSS strings.) You don’t necessarily have to create these structures in memory (indeed, an optimized implementation would try to avoid it), but it certainly makes coding the algorithms a lot easier. Also, it is much easier to manipulate the resulting semantic structure than it is to manipulate a bunch of strings.

This is a rather large problem to tackle, because there are so many elements and attributes in HTML and so many sublanguages embedded within those. However, you can dramatically simplify matters by creating a domain specific language to help you declaratively specify what the semantics are: though I didn’t know it at the time, this was the approach that HTML Purifier took with its HTMLModule system.

Output only what all browsers understand. I used to believe that standards compliance was enough to make sure all browsers understood your syntax (the primary target of most exploits.) Sometimes, however, browsers just don’t parse by the standards at all. Usually, the wild and woolly fields of ambiguous behavior lie outside the dictates of standards, but sometimes the standard says X should happen and Internet Explorer goes and does Y. It is tempting to throw your hands up in the air and just deal with the vulnerabilities as they come in.

But because this is an incompatibility with the standards, there are many web developers have discovered that what should work doesn’t! For CSS, even the most obscure parsing bug in Internet Explorer has been tripped over by some web developer and been documented in a wiki or encoded into a CSS hack. This knowledge, while seemingly not related to the questions of security, is critical for someone writing an HTML filter! It tells you, in particular, what the subset of standards-compliant HTML that is understood by all browsers is.

A more practical note. Whenever I see a new HTML filter pop up on the web, I apply these three litmus tests to the source code (I don’t even bother trying the demo):

- Does it use an HTML parser or attempt to do a bunch of string transformations?

- How much does it whitelist? Just elements and attributes? What contents of attributes does it treat?

- Does the filter appear to have accreted those battle scars that come from dealing with the real world?

I apply the second and third criterion less strongly depending on what the filter claims to support: for example, a filter with no support for CSS needs not worry about its attendant difficulties. In the extreme case, if you’re writing a filter for just <b> and <i>, you can get away with not following any of these recommendations and be fine. But I suspect users always want more features, and you will inevitably start adding new elements and attributes. So I also judge whether or not the source seems to have thought about extending itself in these directions.

A more linguistic note. I use the word “purification” rather than “sanitization”, because sanitization implies that the pathogens have merely been rendered inert, whereas purification implies that they have actually been removed altogether. I think that the latter philosophy is a much safer stance!

Conclusion. Back in the day, I managed to write highly inflammatory comments about other HTML filters in an overzealous attempt to promote my own. I avoid doing direct comparisons these days; instead, I hope this manifesto is helpful to people interested in writing or improving their own filters.

October 6, 2010Hoare logic, despite its mathematical sounding name, is actually a quite practical way of reasoning about programs that most software engineers subconsciously employ in the form of preconditions and postconditions. It explicitly axiomatizes things that are common sense to a programmer: for example, a NOP should not change any conditions, or if a line of code has a postcondition that another line of code has as its precondition, those lines of code can be executed one after another and the inner precondition-postcondition pair ignored. Even if you never actually write out the derivation chains, you’re informally applying Hoare logic when you are trying to review code that uses preconditions and postconditions. Hoare logic is an abstraction that lets us rigorously talk about any imperative language with the same set of rules.

At my very first Semantics lunch, I was treated to a talk by Tony Hoare entitled Abstract Separation Algebra. Unhappy with the fact that the separation logic requires you to talk about three things: the precondition, the actual code, and the postcondition, Tony Hoare re-encoded the scheme as vanilla abstract algebraic structures. While his slides all seemed plausible my abstract algebra wasn’t facile enough to be able to verify all of his results as they flashed by. Accordingly, I’m not going to talk too much about the actual reencoding (I need to work it out myself when I get some free time, ha!). However, I caught a whiff of the underlying thought process that was driving this reencoding, which I thought was a fascinating way of thinking: take an axiom X of your abstraction that you would like to be true, and with a more fundamental pool of axioms (in this case, the usual axioms of abstract algebra) figure out which ones are necessary to make axiom X logically true. This is mind twisting in several ways, not the least of which is that you end up with a set of primitives for which you may not even have a concrete model for!

I will admit, I am a little afraid that the mathematicians in the audience will think, “Well, of course that’s how you pick your set of axioms! And pff, concrete models are for the weak.” (After all, it was said in the talk, “This is abstract algebra, so you should be willing to accept these axioms without having any model in mind.”) But given the questions asked during the talk, among them “so what intuitively does ★ mean?” (for which there was no answer) I think I am justified in claiming that for some of us mortals, this is a rather strange way of thinking about things.

For example, consider the first example that Hoare gave: let (C, ;, ⊑) be a ordered semigroup (that is, a carrier set C equipped an associative binary operation ; that is monotonic with respect to a partial ordering relation ⊑.) Then, we can define the Hoare triple {p} q {r} as the relation p ; q ⊑ r. I immediately want to know: what does C represent (it appears to contain postconditions, lines of code, and preconditions: as Simon Peyton Jones pointed out, it doesn’t seem to distinguish these two types of code) and what does the relation ⊑ represent? But these are rather unimportant questions, since if we shake this algebra-tree, the fruit of sequential composition drops down by monotonicity:

$p ; q \sqsubseteq r \land r ; q’ \sqsubseteq s \Rightarrow p ; q ; q’ \sqsubseteq s$

$\p\ q \r\ \land \r\q’\s\ \Rightarrow \p\ q ; q’ \s\$

Alas, the humble ordered group isn’t enough to encode all of the things we need, so Hoare ends up bringing monoidal lattices and special operators that obey the abides law in order to get the “Separation Algebra”:

A separation algebra is the tuple (C, ⊑, ;, ★, ε, ⊔) where (C, ⊑, ;, ε, ⊔) forms a monoidal lattice and the law (p ★ q) ; (p’ ★ q’) ⊑ (p ; p’) ★ (q ; q’) is fulfilled.

As he admits, he is cheating slightly: he is bringing in precisely the algebraic properties he needs in order to get the properties he wants. But what makes the result notable is the fact that all he needed were the well-studied structures of vanilla abstract algebra. Consequently, when we manipulate our newly encoded Hoare triples, we can rely on the good old techniques of abstract algebra (which, for those of us who never took abstract algebra, may not be “good” or “old.”)

So what do ⊑, ★, ε and ⊔ mean? Because of how we’ve constructed our algebraic structure, we don’t necessarily know! (Although, I’m sure some concrete model is out there just waiting to be discovered. Maybe it’s been discovered already.) Perhaps this is all just an illustration of the category theory mantra: “The self is unimportant: only its relation to others.” A bit zen, if you ask me.

Postscript. There was a nice comment about how the duality between the implementation and specification algebras resembled the Stern dualities: very roughly, that two points determine a line, but a line determines an infinite number of points. I went and searched for the term but couldn’t find any literature about this, so perhaps some pointers would be appreciated.

October 4, 2010I am studying computer science this academic year at Cambridge University, not MIT. For some people, this seems quite strange: when I tell old friends at MIT and new acquaintances at Cambridge about the fact that I am a Cambridge-MIT Exchange student, they say, “Why?” Sometimes, it’s some disbelief at the fact that I am choosing to leave the familiar social circles and situations that mark MIT. Other times, it’s some disbelief that I would want to study computer science at Cambridge rather than MIT (“Just joking,” they add, though I’m not necessarily sure I believe them.)

I would like to explain why, in terms of both before leaving for Cambridge and also how my perspectives have changed after a mere three days in the college. This is a post for future students considering CME, my parents who anxiously want to know how I am doing at Cambridge, and for everyone who may at some point have asked me why. Oh, and there might be something about the functional programming research in Cambridge. wink

Foreign exchange program was always something I had vague intentions of doing while applying to colleges. When you haven’t done sufficient thinking about a subject, or haven’t experienced enough to really have enough data, your ideas are molded by platitudes and truisms like “it will be a good experience” and “you’ll learn more about the world than just the US.” It’s a bit like describing a hurricane as ‘wet’; it’s vacuously true and not very useful. My impressions about what college life might be like were similarly vague.

MIT swept away those preconceptions and made me understand what my college life would be like. And, to tell the truth, I liked it. MIT is busy—it doesn’t give much time for introspection—but when I was able to step away from it all for just a moment and see myself talking about how chroots work while walking home from the Stata center, or working out with a person I really respect and admire how to encode the Futamura projections in Haskell, or finding myself in control of a fictional economy in a live-action role playing game, or staying up all night in a room with my friends talking about relationships until the sun pokes through the window… it makes you fiercely loyal to the place. By the end of my freshman year summer, I was incredibly ready to return to MIT.

But MIT is a slightly pathological environment (perhaps that’s how it attracts the people it does.) And one of the things it is able to do is make you tired. Not just from missing sleep: it also stems from things like having been up for classes since 10AM and having had not more than hour to yourself before some other class or activity demanded your time. Though I have never definitively burned out (usually a weekend of marathoning some TV show is enough to cure me), I see many of my friends constantly sparring with (and occasionally succumbing to) the beast. But I found myself feeling tired, and when the information session for the CME program came up, it seemed extremely timely.

I was not really alone in feeling this way; I saw some familiar faces at the information session, and they expressed similar feelings. And some of these people, in the end, decided not to do the program, because they just couldn’t bring themselves to leave MIT. I suspect that just “feeling tired” would not have been enough to make me go to Cambridge. But the topic had brought back a memory of the summer of my Junior year that became a driving force.

This was the possibility of an alternate reality Edward; one who had not gone to a university where computer scientists were not spilling out of the walls and my social groups were not almost entirely composed of people who, in some way or another, were studying science or engineering. A universe where Edward had gone to a liberal arts university where people did not constantly boast about how “hosed” they were. Post-MIT, it would have been impossible to imagine, except for the fact that I had attended a little program called Governor’s School for the Arts and put away my computer for my oboe and hanged out in the room of a creative writer with artists, actors and other musicians. It was just a month: a mere taste. But it was a taste I never forgot.

It was enough to make me seriously consider the possibility, and let my parents know about it. They strongly approved, which pushed me even further. I decided I’d write the requisite essay and see if they’d admit me. They did.

There are not many major decisions in my life that I have agonized over. This one was a whisper in the cacophony of MIT life. I accepted, and it was a simple, plain fact, with no real impact on my life. MIT was still MIT, my life was still ordinary, life moved on.

My father had always bugged me to “have lunch with every computer science professor at MIT.” I went as far to compile a list of all of the professors and their rooms, so that I could track them down in person. But I never did it: I could never figure out what we would talk about, and in that case, it seemed like a waste of both my time and the professor’s time, unless he was feeling in the mood for doing a bit of advocacy for his work.

Summer at Galois set my decision that I wanted to pursue functional programming in some shape or form as a computer scientist. Once I figured out what it was I actually doing, it was as if my eyes were opened. I learned about research groups at MIT that I had never heard about before, I suddenly discovered that I had a small kernel of research ideas that I could grow as I read and learned more about the computer science research. I had not thought at all about the academics of Cambridge (such a famous university ought to have a sufficient computer science department.)

Now I looked, and I was quite pleased to discover that Cambridge University seemed to be more in line with these research interests than MIT was. The basic undergraduate curriculum covers such topics as denotational semantics, types and Hoare logic. Simon Peyton Jones and Simon Marlow, my two idols, work at Microsoft Research Cambridge. It’s right next to the Cambridge Computer Lab: I dropped by just to squee at the location before heading back home. Several research groups work in areas that feed into functional programming: the Automated Reasoning group holds lunches with talks every week. Glasgow, which was the epicenter of the functional programming movement a few decades ago, is within striking distance.

I believe in the ability for individuals to shape their destiny, but I don’t really believe people know what they’re shaping it to: they just make adjustments when things happen that they don’t like. And sometimes it takes a giant irrational change to move you out of the local optimum. My reasons for going to Cambridge are irrational: how do I know if Cambridge will be less stressful than MIT or if I will actually restore parts of that alternate universe Edward. It’s entirely up to me whether or not I take advantage of the resources around me in Cambridge (I haven’t yet, but it’s only four days in…) But here I am. Nothing has happened yet. But everything is in place. And I am optimistic.